Media Summary: Ready to become a certified watsonx Generative In this deep dive, we'll explain how every modern Large Language Model, from LLaMA to GPT-4, uses the KV Try Voice Writer - speak your thoughts and let

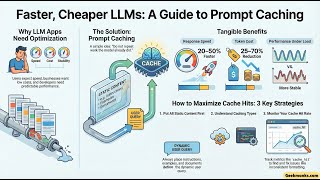

What Is Prompt Caching Optimize Llm Latency With Ai Transformers - Detailed Analysis & Overview

Ready to become a certified watsonx Generative In this deep dive, we'll explain how every modern Large Language Model, from LLaMA to GPT-4, uses the KV Try Voice Writer - speak your thoughts and let Connect with me ▭▭▭▭▭▭ LINKEDIN ▻ / trevspires TWITTER ▻ / trevspires In this 7-minute tutorial, discover how to ... Disclaimer: This video is generated with Google's NotebookLM. In this engineering deep dive, we explore how

Local inference capable LLMs are getting smarter and faster, but there's one critical capability that must work correctly to get the ... Title: Attention Once Is All You Need: Efficient Streaming Inference with Stateful