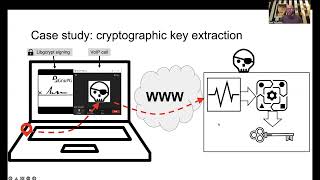

USENIX Security '24: SoK: Neural Network Extraction Through Physical Side Channels (Detailed Analysis & Overview)

Splitting the Difference on Adversarial Training. Matan Levi and Aryeh Kontorovich, Ben-Gurion University of the Negev. The ...

Unveiling the Secrets without Data: Can Graph ...

SecurityNet: Assessing Machine Learning Vulnerabilities on Public Models. Boyang Zhang, Zheng Li, Ziqing Yang, Xinlei He, ...

Exploring Connections Between Active Learning and Model ...

Scalable Multi-Party Computation Protocols for Machine Learning in the Honest-Majority Setting. Fengrun Liu, University of ...

ClearStamp: A Human-Visible and Robust Model-Ownership Proof based on Transposed Model Training. Torsten Krauß, Jasper ...

Tossing in the Dark: Practical Bit-Flipping on Gray-box Deep