Runpod Flash Tutorial: Serverless GPU with Just Python - Detailed Analysis & Overview

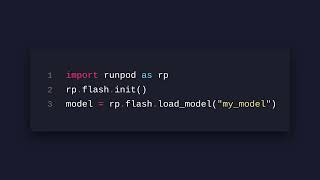

Qwen3 Hugging Face link: … Learn how to start up a …

In this video, we walk through how to deploy a fine-tuned large language model from Hugging Face to a …

Running the latest AI tools and models, such as ComfyUI, Stable Diffusion, and custom LoRAs, doesn't have to require a super expensive …

Timestamps:

- 00:00 - Intro
- 01:06 - Account Creation
- 03:25 - Pod Overview
- 05:52 - Pod Setup Part 1
- 06:56 - SSH Setup
- 09:35 - Pod ...

I explain the end of exponential growth in computing power and the rise of application-specific hardware like …

Want to deploy large language models (LLMs) on …