Media Summary: Learn about watsonx: Large language models ( Ready to become a certified GenAI engineer? Register now and use code IBMTechYT20 for 20% off of your exam ... Artificial Intelligence is evolving rapidly, but many people are confused about the difference between Large Language Models ...

LLM vs RAG Explained Simply: Why LLMs Hallucinate and How RAG Fixes It - Detailed Analysis & Overview

Large language models don't always know the latest ... In this video we will discuss what is ...

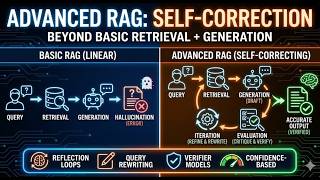

Oftentimes, GAI and ... Welcome to the first video in our new series on Retrieval-Augmented Generation ( ... Hello, beautiful souls. Today, we're taking a walk into the strange and fascinating world of AI

What is RAG (Retrieval-Augmented Generation) and why is it important for developers? In this video, we explain RAG in a ...