
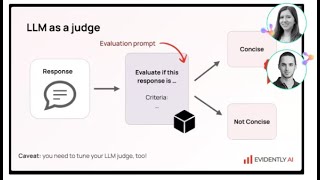

LLM Evaluation in Practice: Error Analysis and Reliable Agent Testing - Detailed Analysis & Overview

This tutorial shows you how to turn real user data into ... In an AI Research Roundup episode, Alex discusses the paper 'CLEAR: ...'. A Stanford session dated November 21, ... is also included. In another conversation, Hamel talks with Ali ... An AI Evals cohort is planned for September 2026.
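One common way to run error analysis on real user data is to have a reviewer label logged traces with failure modes, then count how often each failure mode occurs so the most frequent problems get fixed first. The following is a minimal sketch under that assumption. The `Trace` schema, the `failure_mode_report` function, and the sample labels are hypothetical illustrations, not taken from any of the summarized sources.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Trace:
    """One logged user interaction (hypothetical schema for illustration)."""
    trace_id: str
    user_input: str
    output: str
    # Failure-mode labels a human reviewer assigned while reading the trace.
    failure_modes: list[str] = field(default_factory=list)


def failure_mode_report(traces: list[Trace]) -> list[tuple[str, int, float]]:
    """Rank failure modes by how many traces exhibit them.

    Returns (label, count, share_of_traces) tuples, most frequent first;
    ties are broken alphabetically so the output is deterministic.
    """
    if not traces:
        return []
    counts = Counter()
    for trace in traces:
        # set() so a label repeated within one trace is counted only once.
        counts.update(set(trace.failure_modes))
    total = len(traces)
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [(label, n, n / total) for label, n in ranked]


if __name__ == "__main__":
    # Hypothetical annotated traces, made up for illustration only.
    sample = [
        Trace("t1", "Cancel my order", "Done, it's cancelled.", ["hallucinated_action"]),
        Trace("t2", "What is your refund policy?", "Refunds take 5-7 days.", []),
        Trace("t3", "Book a table for 4", "Booked a table for 2.",
              ["wrong_parameter", "hallucinated_action"]),
        Trace("t4", "Reset my password", "I can't help with that.", ["unnecessary_refusal"]),
    ]
    for label, n, share in failure_mode_report(sample):
        print(f"{label:<22}{n:>3}  {share:.0%}")
```

Counting each label once per trace keeps a single badly broken trace from dominating the ranking, and reporting the share of traces alongside the raw count makes it easier to compare runs over datasets of different sizes.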

Another session presents cutting-edge methodologies for comprehensive ... In one webinar, we heard firsthand about the challenges and opportunities presented by ... In an Open-Source Spotlight interview, Rogerio Chavez, co-founder of LangWatch, introduces Scenario, an open-source ...
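For agent testing, one approach is to script a simulated conversation and check the agent's reply at each turn. The following is a minimal, library-free sketch assuming a hypothetical `agent` callable that maps a chat history to a reply. `ScenarioStep`, `run_scenario`, and `toy_agent` are illustrative names only; they do not reproduce the API of LangWatch's Scenario or of any other testing framework.

```python
from dataclasses import dataclass
from typing import Callable

# A chat history is a list of (role, text) pairs.
Message = tuple[str, str]


@dataclass
class ScenarioStep:
    """One scripted user turn and a check on the agent's reply (hypothetical structure)."""
    user_message: str
    check: Callable[[str], bool]
    description: str


def run_scenario(agent: Callable[[list[Message]], str],
                 steps: list[ScenarioStep]) -> list[str]:
    """Play each scripted user turn against the agent.

    Returns the descriptions of the checks that failed (empty list = all passed).
    """
    history: list[Message] = []
    failures: list[str] = []
    for step in steps:
        history.append(("user", step.user_message))
        reply = agent(history)
        history.append(("assistant", reply))
        if not step.check(reply):
            failures.append(step.description)
    return failures


def toy_agent(history: list[Message]) -> str:
    """Hypothetical rule-based stand-in for a real LLM agent."""
    last_user_text = history[-1][1].lower()
    if "refund" in last_user_text:
        return "Refunds are available within 30 days of purchase."
    return "Could you tell me more about what you need?"


if __name__ == "__main__":
    steps = [
        ScenarioStep("Hi, I have a question.", lambda r: len(r) > 0,
                     "agent answers the greeting"),
        ScenarioStep("Can I get a refund?", lambda r: "30 days" in r,
                     "agent states the refund window"),
    ]
    print(run_scenario(toy_agent, steps))  # prints [] because both checks pass
```

In a real test suite the rule-based `toy_agent` would be replaced by a call to the agent under test, and the string checks by whatever evaluation criteria the team has defined (for example, criteria derived from the failure modes found during error analysis).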